Tech talk: How Tesla makes cars more human

Autonomous cars must interpret and react to situations as a human driver would. Here's how that will work

Never mind when: can self-driving cars ever work at all? That’s probably the question in the minds of most people. To work, fully autonomous cars will require the invention of a machine that has the cognitive abilities of a human.

The building block of the human nervous system is the neuron, and billions of them form a neural network in the body’s central nervous system. To make autonomous cars a reality, computer scientists need to create artificial neural networks (ANNs) that can do the same job as a human’s biological neural network.
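To give a feel for what an artificial neuron is, here is a minimal Python sketch. It is an illustration only, not anything Tesla has published: the function names, the sigmoid activation and every weight below are assumptions chosen for the example. Each neuron multiplies its inputs by weights, adds them up and squashes the result into a value between 0 and 1; a network is many of these wired together in layers.

```python
import math


def neuron(inputs, weights, bias):
    """One artificial neuron: a weighted sum of inputs passed through a sigmoid."""
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1.0 / (1.0 + math.exp(-total))


def layer(inputs, weight_rows, biases):
    """A layer is a list of neurons that all read the same inputs."""
    return [neuron(inputs, w, b) for w, b in zip(weight_rows, biases)]


# Hypothetical numbers: two inputs feed a hidden layer of two neurons,
# whose outputs feed a single output neuron.
hidden = layer([0.5, 0.8], [[1.0, -1.0], [0.5, 0.5]], [0.0, -0.2])
output = neuron(hidden, [1.2, -0.7], 0.1)
print(output)  # a value between 0 and 1
```

Training a real network means adjusting those weights automatically until the outputs match labelled examples, which this sketch does not attempt.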

Assuming that really is achievable, the other thing an autonomous car needs is the ability to see, and this is where opinions in the industry are split. Until recently, conventional wisdom had it that, as well as the cameras, radars and ultrasonic sensors cars already have for cruise control and advanced driver assistance systems, lidar (light detection and ranging) is essential. Lidar is like high-definition radar, using laser light instead of radio waves to scan a scene and create an accurate HD image of it.

One stumbling block has been the high cost of lidar sensors, which only two years ago cost more than £60,000. Lower-cost versions on the way should bring the price down to around £4000, but that’s still a lot for a single component. Not everyone believes lidar is even necessary or desirable, though: both Tesla and research scientists at Cornell University have independently concluded that cars can do without it.

Cornell found that processing by artificially intelligent (AI) computers can distort camera images viewed from the front. But by changing the perspective in the software to more of a bird’s-eye view, scientists were able to achieve a similar positioning accuracy to lidar using stereo cameras costing a few pounds, placed on either side of the windscreen.
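The geometry behind a stereo pair can be sketched in a few lines of Python. This uses the textbook pinhole-camera relation (depth equals focal length times camera spacing, divided by the pixel shift between the two views), not Cornell's actual software, and the function names and all the numbers are hypothetical. Once depth is known, each point can be plotted on a top-down grid as a sideways offset and a forward distance.

```python
def stereo_depth(disparity_px, focal_px, baseline_m):
    """Distance to a point from its pixel shift between left and right images."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


def to_birds_eye(u_px, disparity_px, focal_px, baseline_m, cx_px):
    """Map an image column and disparity to (sideways, forward) metres."""
    forward_m = stereo_depth(disparity_px, focal_px, baseline_m)
    sideways_m = (u_px - cx_px) * forward_m / focal_px
    return sideways_m, forward_m


# Assumed values: 1000 px focal length, cameras 0.5 m apart,
# image centre at column 640, object seen at column 1140 with a 25 px shift.
x, z = to_birds_eye(u_px=1140, disparity_px=25, focal_px=1000,
                    baseline_m=0.5, cx_px=640)
print(x, z)  # 10.0 20.0
```

Under those assumed numbers the object sits 20m ahead and 10m to the right. Because depth depends on one over the pixel shift, small matching errors grow quickly for distant objects, which is one reason the choice of viewpoint for the AI matters.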

Tesla reasons that no human is equipped with laser projectors for eyes and that the secret lies in better understanding the way neural networks identify objects and how to teach them. Whereas a human can identify an object from a single image at a glance, what the computer sees is a matrix of numbers identifying the location and brightness of each pixel in an image.
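The 'matrix of numbers' idea is easy to picture with a toy example in Python. The 4x4 greyscale image below is made up for illustration, as are the function names and the threshold; a real camera frame would have millions of pixels and three colour channels. Each number is a brightness from 0 (black) to 255 (white), and its position in the grid is the pixel's location.

```python
# A hypothetical 4x4 greyscale image: a bright square on a dark background.
image = [
    [12,  15,  14, 13],
    [11, 200, 210, 16],
    [10, 205, 198, 12],
    [13,  14,  11, 10],
]


def brightest_pixel(img):
    """Return (row, column, brightness) of the brightest pixel."""
    return max(
        ((r, c, value) for r, row in enumerate(img) for c, value in enumerate(row)),
        key=lambda item: item[2],
    )


def bright_mask(img, threshold=128):
    """Mark each pixel 1 if brighter than the threshold, else 0."""
    return [[1 if value > threshold else 0 for value in row] for row in img]


print(brightest_pixel(image))  # (1, 2, 210)
for row in bright_mask(image):
    print(row)
```

Nothing in those numbers says 'square', let alone 'pedestrian' or 'lorry'. The computer has to learn which patterns of numbers correspond to which objects.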

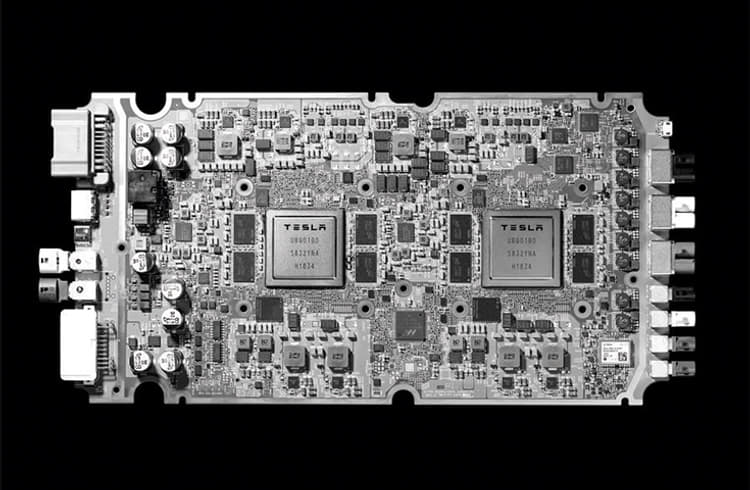

Because of that, the neural network needs thousands of images to learn the identity of an object, each one labelled so that the network can identify the object in any situation.

Tesla says no chip has yet been produced specifically with neural networking and autonomous driving in mind, so it has spent the past three years designing one. The new computer can be retrofitted and has been incorporated in new Teslas since March 2019. The Tesla fleet is already gathering the hundreds of thousands of images needed to train the neural network ‘brains’, running in ‘shadow mode’ without autonomous functions being turned on at this stage. Tesla boss Elon Musk expects to have a complete suite of self-driving software features installed in the company’s cars this year and working robotaxis under test in 2020.

50 trillion operations per second

Tesla boffins say a self-driving car needs a neural networking computer capable of performing a minimum of 50 trillion operations per second (50 TOPS). By comparison, a human brain can manage about 10 TOPS. The new Tesla computer consumes no more than 100W of power, so it can be retrofitted. Bosch and NVIDIA are developing a similar ‘brain’ for autonomous cars, due to be ready in 2020. It’s called the Bosch AI self-driving computer.
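For a sense of scale, the figures quoted above can be turned into two simple ratios. This is back-of-envelope arithmetic on the article's own numbers, and the variable names are just labels for the example.

```python
required_tops = 50      # minimum quoted for a self-driving computer
brain_tops = 10         # figure quoted for a human brain
power_budget_w = 100    # maximum power draw quoted for the Tesla computer

# Minimum efficiency if the computer delivers 50 TOPS within 100W.
tops_per_watt = required_tops / power_budget_w

# How the requirement compares with the quoted brain figure.
ratio_to_brain = required_tops / brain_tops

print(tops_per_watt)   # 0.5
print(ratio_to_brain)  # 5.0
```

In other words, the computer has to deliver at least half a trillion operations per second for every watt it draws, and five times the throughput quoted for the brain.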

27 Aug 2019


Shahkar Abidi

Anurag Chaturvedi

Prerna Lidhoo
