How modeling and simulation help understand capacity fade

Capacity loss in Li-ion batteries occurs when a battery ages with time and use, eventually leading to unacceptably low performance. Modeling and simulation can provide valuable insights into this phenomenon.

Over the last few years, lithium-ion (Li-ion) batteries have become increasingly popular, driven by features such as higher energy density, higher cell voltage, and a lower self-discharge rate compared with other rechargeable batteries. They are used in a wide range of devices today, from cellphones and laptops to cars and everything in between.

However, for Li-ion batteries to power future transportation and energy storage solutions, original equipment manufacturers (OEMs) need to address the issue of capacity fade.

Simply put, capacity fade (or capacity loss) occurs when a Li-ion battery ages with time and use and can no longer hold the charge it once could, eventually leading to unacceptably low performance. Capacity fade is especially important in automotive applications, where the battery is expensive and customers expect a service life comparable to that of combustion-engine vehicles.

The US Advanced Battery Consortium (USABC) has set a goal of an electric vehicle (EV) battery lifetime of 15 years and 1,000 cycles. To realise this goal, EV manufacturers must be able to understand and accurately predict capacity fade. This is easier said than done, since Li-ion batteries are complex systems involving several interlinked physical and chemical phenomena. Modeling and simulation can provide that understanding and help generate the new ideas required to meet these goals.

Why capacity fade occurs

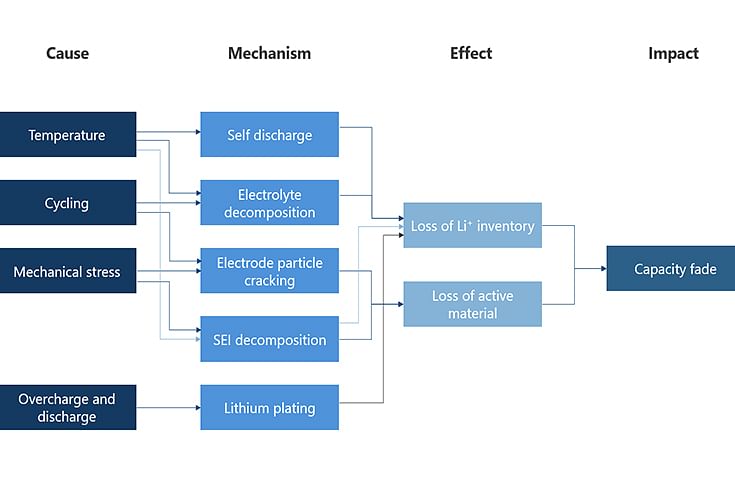

Capacity fade in a Li-ion battery can occur due to various phenomena. One of the biggest contributors to ageing is an excessively high operating temperature. Cycling, overcharge, and over-discharge, as well as mechanical stresses in the battery, also contribute to ageing.

Figure 1: The self-discharge rates of a cell vary significantly with conditions such as the operating temperature and state of charge (SOC).

What contributes to capacity fade in a battery?

There are two ways in which battery ageing can occur: calendar ageing and cycle ageing. Calendar ageing occurs while a battery is stored over long periods of time, whereas cycle ageing results from repeated charging and discharging. The self-discharge rates of a cell vary significantly with conditions such as the operating temperature and state of charge (SOC). To illustrate this, researchers performing calendar life studies have reported that cell life can be halved when the temperature is held at 35 °C instead of 25 °C.
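One way to make the 25 °C versus 35 °C example concrete is an Arrhenius rate law. This is our assumption, not necessarily the model used in the studies mentioned above: if calendar fade follows an Arrhenius temperature dependence and calendar life scales inversely with the fade rate, then halving the life over a 10 °C step implies a specific activation energy. The helper names below (`to_kelvin`, `acceleration_factor`, `activation_energy_for_ratio`) are hypothetical.

```python
import math

R = 8.314462618  # universal gas constant, J/(mol*K)


def to_kelvin(t_c: float) -> float:
    """Convert a temperature from degrees Celsius to kelvin."""
    return t_c + 273.15


def acceleration_factor(ea: float, t_ref_c: float, t_c: float) -> float:
    """Fade rate at t_c divided by the fade rate at t_ref_c (Arrhenius assumption)."""
    return math.exp(ea / R * (1.0 / to_kelvin(t_ref_c) - 1.0 / to_kelvin(t_c)))


def activation_energy_for_ratio(ratio: float, t_ref_c: float, t_c: float) -> float:
    """Activation energy (J/mol) that makes the rate grow by `ratio` from t_ref_c to t_c."""
    return R * math.log(ratio) / (1.0 / to_kelvin(t_ref_c) - 1.0 / to_kelvin(t_c))


# Life halved from 25 °C to 35 °C means the fade rate doubled.
ea = activation_energy_for_ratio(2.0, 25.0, 35.0)
print(f"Implied activation energy: {ea / 1000:.1f} kJ/mol")

# Relative calendar life, assuming life is inversely proportional to the fade rate.
for temp_c in (25.0, 35.0, 45.0):
    relative_life = 1.0 / acceleration_factor(ea, 25.0, temp_c)
    print(f"{temp_c:.0f} °C: {relative_life:.2f} x the life at 25 °C")
```

Under these assumptions the implied activation energy is roughly 53 kJ/mol. Because the Arrhenius factor depends on 1/T rather than T, each further 10 °C step shortens life by slightly less than another factor of two, so the "halving per 10 °C" figure is best treated as a local rule of thumb.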

One of the major contributors to cell degradation is the growth of the solid electrolyte interphase (SEI) layer. The SEI layer is desirable, since it passivates the anode surface and protects it from further degradation, thus ensuring stable performance. However, repeated cycling and elevated temperatures destabilise this layer, which leads to uncontrolled growth. This uncontrolled SEI growth is a major contributor to capacity fade in a battery and can also lead to catastrophic failure due to a short circuit.
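A frequently used simplification, assumed here rather than taken from the study discussed in this article, treats SEI growth as limited by transport through the layer that already exists. The growth rate is then inversely proportional to the thickness, d(delta)/dt = k/delta, which integrates to delta(t) = sqrt(delta0^2 + 2kt). If the lithium consumed is also assumed proportional to the newly formed SEI, capacity loss follows the same trend. All parameter values below (`DELTA0`, `K`, `LOSS_PER_M`) are hypothetical and purely illustrative.

```python
import math


def sei_thickness_analytic(t: float, delta0: float, k: float) -> float:
    """Closed-form solution of d(delta)/dt = k / delta with delta(0) = delta0."""
    return math.sqrt(delta0**2 + 2.0 * k * t)


def sei_thickness_euler(t_end: float, delta0: float, k: float, steps: int = 100_000) -> float:
    """Explicit-Euler integration of the same growth law, as a cross-check."""
    dt = t_end / steps
    delta = delta0
    for _ in range(steps):
        delta += dt * k / delta
    return delta


# Hypothetical parameters, not fitted to any real cell:
DELTA0 = 5e-9      # SEI thickness after formation, m
K = 3e-24          # lumped diffusion-limited growth constant, m^2/s
LOSS_PER_M = 5e6   # assumed fractional capacity loss per metre of new SEI

DAY = 86_400.0
for days in (0, 30, 365, 5 * 365):
    delta = sei_thickness_analytic(days * DAY, DELTA0, K)
    loss = LOSS_PER_M * (delta - DELTA0)
    print(f"{days:5d} d: SEI {delta * 1e9:5.1f} nm, capacity loss {loss * 100:4.1f} %")
```

The square-root law makes growth fastest early on and slower later, which is one reason simple fade models often include a sqrt(t) term. Real SEI growth under cycling and elevated temperature can deviate from this idealised law.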

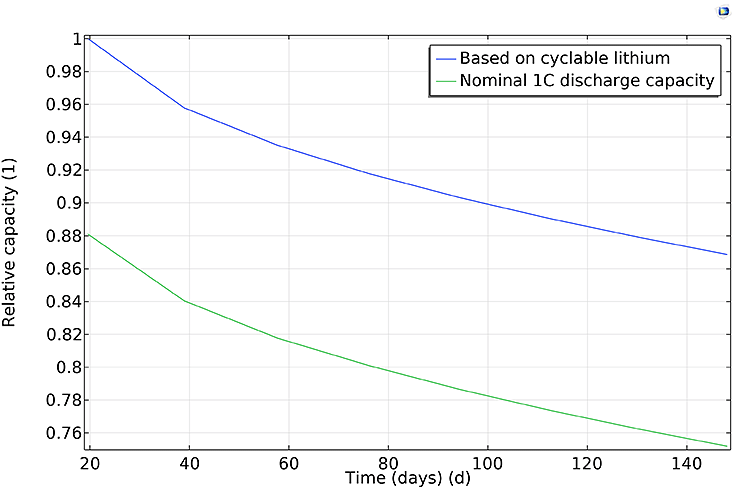

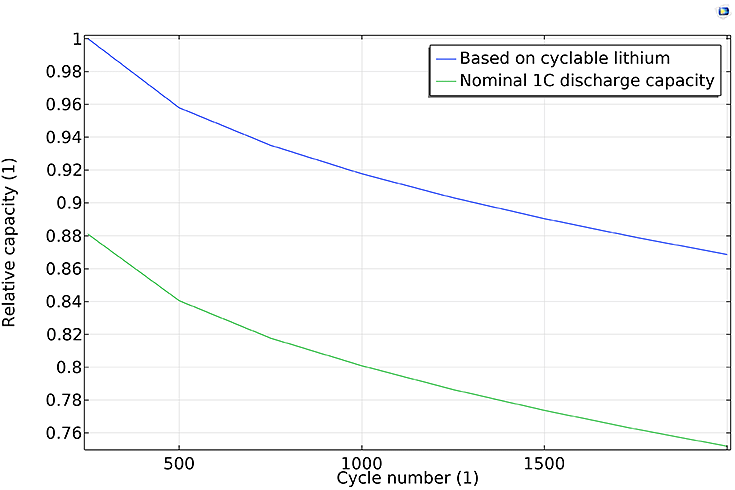

Figure 2(a): Capacity versus total accumulated cycle time;

Figure 2(b): Capacity versus cycle number, for the total amount of cyclable Li and the nominal 1C discharge.

Modeling capacity fade

Experiments are indispensable, but ageing studies are costly and time-consuming, which makes relying on experiments alone impractical for understanding capacity fade. Moreover, electrochemical and mathematical modeling afford physical insights that are often difficult to obtain even through experimentation. Modeling and simulation can therefore be used both to design experiments and to evaluate their results, so that only the experiments needed to validate the models have to be performed.

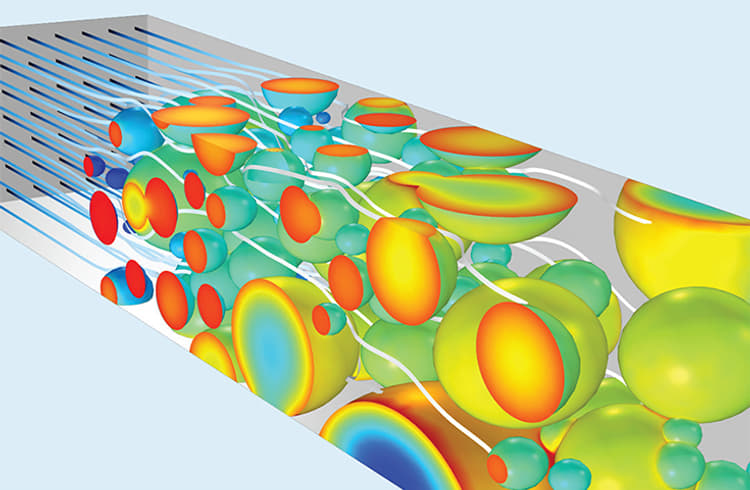

Modeling and simulation can provide pertinent insights into the inner workings of a cell. Mechanisms such as lithium plating, particle cracking, and electrolyte decomposition can be accurately described in multi-physics models. One can then assess the relative importance of phenomena such as SEI layer growth and loss of Li inventory, identify which factors affect capacity fade, and thereby develop strategies to mitigate it for given drive cycles.

As an example, Figure 2 above shows the relative capacity versus time and versus cycle number, respectively. Both the capacity based on the amount of cyclable lithium and the nominal 1C discharge capacity decrease continuously. The fade rate is higher during the initial cycles, and both capacities decay by a similar amount, about 20% over the 2000 cycles of the study. Because the 1C discharge capacity falls no further than the cyclable-lithium capacity, the main contributor to the 1C discharge capacity fade is the loss of lithium, not increased film resistance due to SEI layer growth.
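The reasoning in the paragraph above amounts to simple bookkeeping, sketched below under a stated assumption: the fade not explained by lost cyclable lithium is attributed to other causes, such as higher film resistance, and the two contributions are treated as additive. The function `fade_breakdown` and the second set of input values are hypothetical; the first call only mirrors the "about 20% for both" situation described in the text.

```python
def fade_breakdown(q_1c_rel: float, q_li_rel: float) -> dict:
    """Split the 1C discharge-capacity fade into a lithium-loss part and a remainder.

    q_1c_rel: nominal 1C discharge capacity relative to its initial value (0..1)
    q_li_rel: capacity based on cyclable lithium relative to its initial value (0..1)
    """
    total_fade = 1.0 - q_1c_rel
    li_loss_fade = 1.0 - q_li_rel
    # Assumption: whatever the Li loss does not explain is due to kinetic
    # limitations such as increased SEI film resistance.
    other_fade = total_fade - li_loss_fade
    li_share = li_loss_fade / total_fade if total_fade > 0 else float("nan")
    return {"total": total_fade, "li_loss": li_loss_fade,
            "other": other_fade, "li_share": li_share}


# Both capacities about 20 % lower, as in the study described above.
same_decay = fade_breakdown(q_1c_rel=0.80, q_li_rel=0.80)
# Hypothetical contrasting cell where Li loss explains only part of the fade.
mixed_decay = fade_breakdown(q_1c_rel=0.80, q_li_rel=0.90)
print(same_decay)
print(mixed_decay)
```

When the two curves coincide, the lithium-loss share is essentially 100% and the remainder is close to zero, which is the situation the text uses to rule out film resistance as the main cause.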

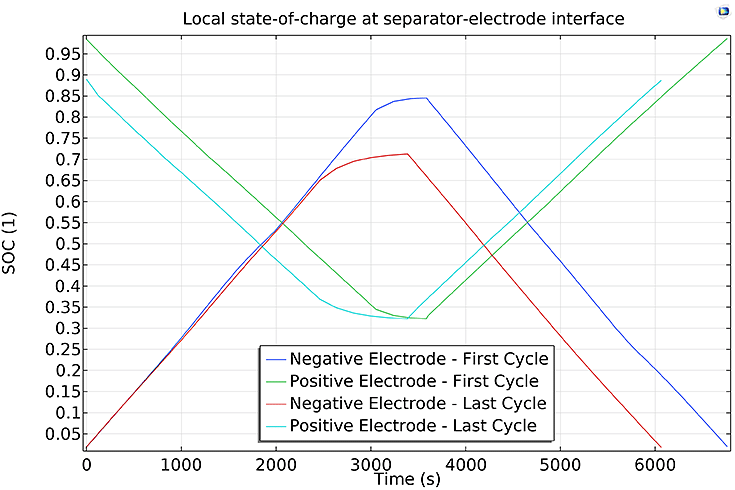

Figure 3: Local SOC at the separator/electrode boundaries.

Figure 3 shows the local SOC at both the cathode and the anode for the first and last cycles of the study. During discharge, the anode displays a relatively greater capacity fade than the cathode. Using such information, battery users can decide which cooling strategy to use for their packs and predict battery hotspots for different operating conditions, such as SOC and charge and discharge rates, thereby minimising capacity fade.

In cases where a full analysis of the electrochemical and physical phenomena may not be possible, for example in system models or onboard models, simpler mathematical models, usually referred to as lumped models, can be used. These models can be based on validated multi-physics models and, by receiving measured data and performing parameter estimation, they can predict capacity fade and its cause. Such models are only as reliable as the range of data used to validate them. Within this range of calendar and cycle life data, a lumped model can quickly provide useful insights into capacity fade at both the cell and pack level.
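As an illustration of parameter estimation for a lumped model, the sketch below assumes a hypothetical two-term form, loss = a * sqrt(t_days) + b * cycles. This combines a square-root calendar term with a linear cycling term and is not the model used by the authors. The two coefficients are fitted by ordinary least squares on synthetic, noise-free data generated from assumed values, so the fit should recover them. The model also records its fitting range and refuses to extrapolate beyond it, reflecting the validation-range caveat above. `LumpedFadeModel`, `fit_lumped_model`, and all numbers are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class LumpedFadeModel:
    """Hypothetical lumped model: fractional loss = a*sqrt(t_days) + b*cycles."""
    a: float
    b: float
    max_days: float
    max_cycles: float

    def predict(self, t_days: float, cycles: float) -> float:
        # Only trust the model inside the calendar/cycle range it was fitted on.
        if t_days > self.max_days or cycles > self.max_cycles:
            raise ValueError("outside the calendar/cycle range used for fitting")
        return self.a * math.sqrt(t_days) + self.b * cycles


def fit_lumped_model(data) -> LumpedFadeModel:
    """Least-squares fit of a and b from (t_days, cycles, measured_loss) tuples."""
    # Accumulate the 2x2 normal equations for y = a*x1 + b*x2 (no intercept).
    s11 = s12 = s22 = s1y = s2y = 0.0
    for t_days, cycles, loss in data:
        x1, x2 = math.sqrt(t_days), float(cycles)
        s11 += x1 * x1
        s12 += x1 * x2
        s22 += x2 * x2
        s1y += x1 * loss
        s2y += x2 * loss
    det = s11 * s22 - s12 * s12
    if abs(det) <= 1e-12 * s11 * s22:
        raise ValueError("data cannot separate calendar and cycling effects")
    # Cramer's rule for the 2x2 system.
    a = (s1y * s22 - s12 * s2y) / det
    b = (s11 * s2y - s12 * s1y) / det
    return LumpedFadeModel(a, b, max(d[0] for d in data), max(d[1] for d in data))


# Synthetic test matrix: stored cells (0 cycles) plus cycled cells.
A_TRUE, B_TRUE = 0.002, 5e-5
points = [(30, 0), (90, 0), (180, 0), (360, 0),
          (30, 100), (90, 300), (180, 600), (360, 1000)]
data = [(t, n, A_TRUE * math.sqrt(t) + B_TRUE * n) for t, n in points]

model = fit_lumped_model(data)
print(f"a = {model.a:.4g}, b = {model.b:.4g}")
print(f"predicted loss at 200 days / 500 cycles: {model.predict(200, 500):.3%}")
```

Mixing stored and cycled cells in the test matrix is what lets the fit separate the calendar and cycling terms. If every cell were cycled at the same rate, cycles and time would be strongly correlated and the two coefficients would be poorly determined.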

In summary, modeling and simulation can provide valuable insights to better understand capacity fade. High-fidelity multi-physics modeling further provides descriptions at the microscopic level, which battery manufacturers and battery users need in order to understand how temperature, SOC, voltage, current density during charge and discharge, number of cycles, and other operating conditions affect capacity fade.

03 May 2021

Shahkar Abidi

Anurag Chaturvedi
